
What Are World Foundation Models?

A technical primer on the AI systems learning physics instead of just patterns

NVIDIA Cosmos: Physical AI Gets Its Platform

NVIDIA announced Cosmos Reason 2 and Predict 2.5 at CES last month. If you missed it between the humanoid robot demos and laundry-folding machines, here is why it matters: it signals where AI development is heading, away from static tokens and toward interactive, high-fidelity world-to-world translation. With Tencent's HY-World 1.5 in late 2025, followed by Google's Genie 3 and NVIDIA Cosmos in January, the industry crossed a key threshold: real-time generation at 24 frames per second. We have moved from slideshow rollouts to playable framerates.

Within weeks of the announcement, Uber, Figure AI, Agility, Waabi, XPENG, and over a dozen other robotics and autonomous vehicle companies declared they were building on it. AWS published deployment architectures for running Cosmos on their infrastructure. The term "world foundation model" went from research paper vocabulary to industry talking point almost overnight.

This article explains what these systems actually are, how they differ from the large language models you already know, and where the technology stands today. For industry adoption and business implications, see "The Year of World Foundation Models."

Executive Summary

World foundation models (WFMs) represent a change in what we expect AI systems to learn. Instead of just spotting patterns in still images, text, or sensor readings, these systems are trained to model how a situation unfolds over time and how different actions can lead to different outcomes. The idea is similar to earlier foundation models in language and vision, but the focus is different. Rather than recognizing or generating content, WFMs aim to model how a world behaves. They can play out what might happen next, turn simplified descriptions of a situation into realistic-looking sensor views, and help compare different possible outcomes. In short, they are about modeling change and interaction, not just recognizing what is already there.

The key capability of these models is playing out "what if" scenarios. Given a description of the current situation and a possible action or rule, they can explore several versions of what might happen next. In real systems, this ability takes a few forms. Some models predict or generate what future scenes might look like. Others take simplified inputs, such as maps, outlines, or simulated views, and turn them into sensor-like data such as camera feeds. Some are also used to check whether a situation is internally consistent, or to help compare possible outcomes when planning.

This resembles how people think when working towards a goal, but only at the surface level. We do not fully understand how humans perform this kind of judgement and comparison, and these systems do not understand it either. They are imitating patterns found in data, not reasoning in a human sense, which is one reason building reliable systems of this kind is so difficult.

These models are still limited. They work best over short time spans and in controlled situations. Getting them to handle long stretches of time or the full complexity of the real world, especially when conditions change in unexpected ways, remains an open research challenge.

From Language Models to World Models

Early foundation models demonstrated what happens when a single large model is pre-trained on a broad slice of one modality and then adapted across many downstream tasks. This was first evident in language models, but the same pattern quickly extended to vision and multimodal systems, where shared representations could be repurposed for classification, segmentation, retrieval, and generation across domains. Models adapted this way produce state-of-the-art results on those tasks.

World foundation models extend this trajectory, but shift the emphasis away from producing outputs toward modeling dynamics. Rather than learning how text, audio, pixels, or other features co-occur, they aim to capture how environments evolve over time, how actions change state, and how physical constraints such as geometry, contact, and motion shape what can happen next.

World models are often described as systems capable of representing and simulating interactive environments, including spatial structure, agents, and physical processes, in a form that can be explored and manipulated. Research and industry descriptions converge on the same core idea: a learned internal model that supports prediction under action, rather than static recognition or one-shot generation.

This represents a subtle but very important shift. Whereas earlier foundation models primarily target semantic completion or content generation, world models are concerned with system state evolution. Their purpose is not to produce artifacts, but to maintain an internal representation of how a system behaves as inputs and actions change.

What "Foundation Model" Means in This Context

The first step is to separate the notion of a foundation model from that of a world foundation model. A foundation model is a large pre-trained model designed to serve as a reusable base across many downstream tasks (Bommasani et al., 2021). Although the term emerged from language modeling, the concept itself is modality-agnostic. MAE, SAM, DINO, and CLIP are examples of foundation models from vision and vision-language modeling.

In this context, a world foundation model is a foundation model trained to learn how a world changes over time, rather than just recognizing patterns in static data. Instead of working with a small number of hand-defined states and rules, it maintains an internal notion of "state" that is continuously updated as new observations arrive and actions are taken. This idea has its roots in robotics, where systems must repeatedly sense the environment, decide what to do next, act, and then update their understanding based on the result. Earlier approaches, such as reinforcement learning, relied on many slow trial-and-error rollouts and carefully designed reward functions, which proved difficult to scale beyond narrow tasks.

Vision-language-action models (VLAs) addressed some of these limits by learning from large datasets that link images, instructions, and actions, allowing systems to choose actions based on supervised examples rather than sparse rewards. World foundation models extend this trajectory further by shifting the learning objective away from individual tasks or policies and toward learning the underlying dynamics of the environment itself, with state transitions inferred directly from data rather than explicitly programmed (Ha and Schmidhuber, 2018; Schrittwieser et al., 2020).
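To make "state transitions inferred directly from data rather than explicitly programmed" concrete, here is a minimal sketch. It assumes a toy one-dimensional system whose hidden rule is linear; the function names (`true_step`, `collect_transitions`, `fit_linear_dynamics`) and the constants are made up for illustration and are not taken from any real system. The learner only sees logged (state, action, next state) triples and recovers the rule by least squares.

```python
import random
from typing import List, Tuple

Transition = Tuple[float, float, float]  # (state, action, next_state)

def true_step(state: float, action: float) -> float:
    """Hidden 'environment' rule (hypothetical). The learner never reads these constants."""
    return 0.9 * state + 0.5 * action

def collect_transitions(n: int, seed: int = 0) -> List[Transition]:
    """Log n transitions while applying random actions."""
    rng = random.Random(seed)
    data, state = [], 1.0
    for _ in range(n):
        action = rng.uniform(-1.0, 1.0)
        next_state = true_step(state, action)
        data.append((state, action, next_state))
        state = next_state
    return data

def fit_linear_dynamics(data: List[Transition]) -> Tuple[float, float]:
    """Least-squares fit of next_state ~ a*state + b*action via 2x2 normal equations."""
    sxx = sum(s * s for s, _, _ in data)
    sxu = sum(s * u for s, u, _ in data)
    suu = sum(u * u for _, u, _ in data)
    sxy = sum(s * y for s, _, y in data)
    suy = sum(u * y for _, u, y in data)
    det = sxx * suu - sxu * sxu
    a = (sxy * suu - suy * sxu) / det
    b = (suy * sxx - sxy * sxu) / det
    return a, b

data = collect_transitions(200)
a_hat, b_hat = fit_linear_dynamics(data)
print(f"recovered dynamics: next = {a_hat:.3f} * state + {b_hat:.3f} * action")
```

Real world foundation models replace the two fitted numbers with a large neural network and the scalar state with a high-dimensional latent, but the principle in this sketch is the same: the transition rule is estimated from logged experience, not written by hand.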

Five Criteria for World Foundation Models

A system qualifies as a world foundation model if it meets the following criteria:

- It maintains an internal representation of state over time (explicit latent state or implicit recurrent/context state) sufficient to support temporally consistent rollouts (Ha and Schmidhuber, 2018).
- It supports action-conditioned prediction, mapping state and action to future state (Schrittwieser et al., 2020).
- It can perform rollouts under interventions: varying actions, constraints, or initial conditions to generate counterfactual trajectories (Johnson, 2025).
- It is pre-trained across diverse environments or scenarios, rather than tuned to a single task or layout (Google DeepMind, 2025).
- It remains adaptable through post-training or fine-tuning, consistent with the foundation model paradigm (Bommasani et al., 2021).

These properties distinguish world foundation models from task-specific simulators, perception systems, or control policies. Operationally, these criteria surface as three coupled interfaces:

Dynamics (rollouts): Action- or constraint-conditioned evolution of a latent state, enabling counterfactual futures.

Observation (rendering/translation): Mapping structured conditions (simulation, maps, depth, segmentation) into realistic sensor observations for training and testing.

Assessment (understanding): Interpreting goals and conditions, scoring fidelity or consistency, and supporting selection among candidate rollouts.

Different organizations emphasize different layers, but the generic concept is the same: a reusable simulator with multiple I/O surfaces.
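The three interfaces can be sketched as methods on a single object. This is an illustrative toy, not any vendor's API: `ToyWorldModel`, `State`, and the method names are hypothetical, and the "learned" dynamics are a hand-written 1D cart so the example stays runnable.

```python
from dataclasses import dataclass
from typing import List, Sequence

@dataclass(frozen=True)
class State:
    position: float
    velocity: float

class ToyWorldModel:
    """Hand-written stand-in for a learned model: a 1D cart with unit time steps."""

    def step(self, state: State, action: float) -> State:
        # Dynamics interface: (state, action) -> next state.
        velocity = state.velocity + action
        return State(state.position + velocity, velocity)

    def rollout(self, state: State, actions: Sequence[float]) -> List[State]:
        # Apply a sequence of actions and keep every intermediate state.
        trajectory = [state]
        for action in actions:
            trajectory.append(self.step(trajectory[-1], action))
        return trajectory

    def render(self, state: State, width: int = 11) -> str:
        # Observation interface: structured state -> sensor-like view (a text strip).
        cell = min(max(int(round(state.position)), 0), width - 1)
        return "." * cell + "#" + "." * (width - cell - 1)

    def score(self, trajectory: Sequence[State], goal: float) -> float:
        # Assessment interface: higher is better (closer final position to the goal).
        return -abs(trajectory[-1].position - goal)

model = ToyWorldModel()
start = State(position=0.0, velocity=0.0)
candidates = {
    "push": [1.0, 0.0, 0.0],
    "push_then_brake": [1.0, 0.0, -1.0],
}
scores = {
    name: model.score(model.rollout(start, actions), goal=2.0)
    for name, actions in candidates.items()
}
best = max(scores, key=scores.get)
print(best, model.render(model.rollout(start, candidates[best])[-1]))
```

The same starting state produces different futures under different action sequences, the renderer turns a state into something observation-like, and the scorer picks between candidate rollouts. In a production system each of these would typically be a separate large model.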

How WFMs Differ From Pattern Matching

"Pattern matching" is often used as a catch-all criticism of LLMs and the like, but the distinction here is more concrete. Most machine learning systems are trained to map inputs to likely outputs based on statistical regularities in their data. Given an observation, they produce a label, a caption, or a plausible continuation.

World foundation models are trained with a different objective. They are meant to represent environments in a way that supports counterfactual questions: if an agent takes this action, what happens next?

Quanta Magazine describes a world model as a compact internal "snow globe" version of reality that an agent can use to evaluate predictions before acting (Johnson, 2025). Systems such as DeepMind's Genie demonstrate this idea by generating interactive environments that remain coherent under navigation and manipulation within short demonstrated interaction horizons (Google DeepMind, 2025).

Functionally, the difference is straightforward. Descriptive systems map observations to outputs. Predictive systems map state and action to future state. World foundation models are built around the latter, typically with additional interfaces for conditioning, rendering, and evaluation.
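The two signatures can be written down side by side. The sketch below uses a made-up thermostat example; `describe`, `predict`, and the temperature constants are hypothetical and chosen only to show the difference in shape between the two kinds of function.

```python
from typing import Callable

# Descriptive: observation -> output (here, a label).
Describe = Callable[[float], str]
# Predictive: (state, action) -> future state.
Predict = Callable[[float, bool], float]

def describe(temperature_c: float) -> str:
    """Says what is the case right now."""
    return "cold" if temperature_c < 20.0 else "comfortable"

def predict(temperature_c: float, heater_on: bool) -> float:
    """Says what the next state would be under a chosen action (toy rule)."""
    return temperature_c + 1.5 if heater_on else temperature_c - 0.5

now = 18.0
label = describe(now)
if_heating = predict(now, heater_on=True)
if_idle = predict(now, heater_on=False)
print(label, if_heating, if_idle)
```

Only the second function can answer a counterfactual question, because only it takes the action as an input.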

What World Foundation Models Are Trained On

Across current systems, the training pattern is recognizable. Models learn from sequences of world evolution, using objectives that predict or generate future observations and latent state trajectories, often conditioned on actions or constraints. Data sources emphasize change over time, including large-scale video, simulation, multimodal sensor streams, and, in some cases, embodied interaction data. Data curation and tokenization are treated as core design problems rather than preprocessing details.
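As a rough illustration of a prediction objective, the sketch below scores candidate models by their mean squared one-step prediction error on a logged trajectory. This is one generic form, assumed here for illustration; it is not the documented objective of any system named in this article, and the log and both candidate models are made up.

```python
from typing import Callable, List, Tuple

Transition = Tuple[float, float, float]  # (state, action, next_state)

def one_step_loss(model: Callable[[float, float], float],
                  data: List[Transition]) -> float:
    """Mean squared error between predicted and logged next states."""
    return sum((model(s, a) - s_next) ** 2 for s, a, s_next in data) / len(data)

# Made-up log in which the true rule happens to be next_state = state + action.
logged = [(0.0, 1.0, 1.0), (1.0, 1.0, 2.0), (2.0, -1.0, 1.0), (1.0, 0.0, 1.0)]

def ignores_action(state: float, action: float) -> float:
    return state            # predicts "nothing changes"

def uses_action(state: float, action: float) -> float:
    return state + action   # conditions the prediction on the action

loss_without_action = one_step_loss(ignores_action, logged)
loss_with_action = one_step_loss(uses_action, logged)
print(loss_without_action, loss_with_action)
```

Training drives a loss of this kind down over very large datasets. The comparison also shows why action conditioning matters: a model that ignores the action cannot fit transitions that depend on it.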

An important and still emerging signal comes from embodied interaction. In robotics settings, this includes proprioceptive state, force and torque measurements, contact events, and action traces collected during real interaction with the environment. These signals provide direct supervision over how actions change state, not just how the world looks. While most current systems still rely heavily on video and simulation, many research efforts treat embodied interaction as the long-term path to grounding learned dynamics in physical reality (Ha and Schmidhuber, 2018; Google DeepMind, 2025).

Who Is Building World Foundation Models?

Because world foundation models require large-scale spatiotemporal data, long-horizon training, and substantial compute, development is currently concentrated among a small number of well-resourced organizations:

| Focus Area | Players |
| --- | --- |
| Learned interactive world generators | DeepMind (Genie) |
| Embodied simulation platforms | Meta (Habitat 3) |
| Learned simulation and sensor translation | NVIDIA (Cosmos Predict/Transfer/Reason) |
| Spatial world reconstruction and generation | World Labs (Marble), Tencent (HY-World 1.5), Kling Video |

Together, these efforts illustrate both the promise of world models and the scale constraints that currently limit who can build them.

This landscape is changing fast. Tencent's HY-World 1.5 model was released December 18, 2025, and NVIDIA's Cosmos went open-source at CES just weeks later.

"Reasoning" Without Over-Claiming

In this context, the term reasoning is used in a narrow, operational sense. It refers only to observable behavior in which a system evaluates possible outcomes before acting, not to human-like cognition, introspection, or symbolic thought.

World models learn internal representations that model change, supporting planning via internal rollouts and counterfactual evaluation (Johnson, 2025). No appeal is made to explicit logic or symbolic rules. Any form of "reasoning" arises from learned dynamics and predictive structure rather than deliberate, human-like thought.

A complementary "world understanding" model can provide grounding, preference signals, and controllability: it interprets prompts and conditions, checks for inconsistencies, and can score or critique generated rollouts. This is not evidence of human-like introspection; it is an operational layer that improves alignment between intended scenarios and simulated outcomes.
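In this operational sense, "reasoning" can be as simple as: generate candidate rollouts, discard the inconsistent ones, score the rest, and act on the best. The sketch below assumes a toy 1D world with integer positions; the bounds, the effort penalty, and all function names are hypothetical and stand in for a learned dynamics model and a learned critic.

```python
from itertools import product
from typing import List, Sequence, Tuple

ACTIONS = (-1, 0, 1)  # step left, stay, step right

def rollout(start: int, plan: Sequence[int]) -> List[int]:
    """Internal rollout: the positions visited if the plan were executed."""
    positions = [start]
    for action in plan:
        positions.append(positions[-1] + action)
    return positions

def is_consistent(positions: Sequence[int], low: int = 0, high: int = 4) -> bool:
    """Critic check: reject rollouts that leave the allowed corridor."""
    return all(low <= p <= high for p in positions)

def score(positions: Sequence[int], plan: Sequence[int], goal: int) -> float:
    """Critic score: closeness to the goal, minus a small penalty per move."""
    effort = sum(1 for a in plan if a != 0)
    return -abs(positions[-1] - goal) - 0.1 * effort

def plan_by_rollouts(start: int, goal: int, horizon: int = 3) -> Tuple[float, Tuple[int, ...]]:
    """Enumerate every plan, keep consistent ones, return the best (score, plan)."""
    best = None
    for plan in product(ACTIONS, repeat=horizon):
        positions = rollout(start, plan)
        if not is_consistent(positions):
            continue
        value = score(positions, plan, goal)
        if best is None or value > best[0]:
            best = (value, plan)
    if best is None:
        raise ValueError("no consistent plan found")
    return best

best_value, best_plan = plan_by_rollouts(start=0, goal=2)
print(best_plan, rollout(0, best_plan))
```

Nothing here involves symbolic logic. The apparent deliberation comes entirely from evaluating predicted outcomes before committing to an action. Real systems sample candidates instead of enumerating them, since the number of plans grows exponentially with the horizon.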

Vision Foundations as the Substrate Beneath World Models

Advances in vision foundation models have stabilized perception in many settings, providing robust visual representations, promptable object structure, and language-conditioned interfaces across images and video (Caron et al., 2021; Kirillov et al., 2023; Ravi et al., 2024). These capabilities form the substrate beneath world models.

What remained unresolved is dynamics. Vision models, even when extended to video, are largely descriptive. They encode what is present, but not how environments evolve under action or how constraints accumulate over time. World foundation models start where pure vision stops, treating visual representations as inputs to the harder problem of learning transition structure.

Multimodal world modeling complicates this picture further. Language can function as a control signal, a source of goals, or a persistent constraint that unfolds over time. If linguistic context is part of the modeled state, then tokenization, alignment, and temporal consistency across modalities become central design problems. Many current systems still treat language as conditioning rather than as a co-evolving component of state. Fully multimodal world foundation models remain an open research problem.

What You Need to Know: From Demo to Deployment

World foundation models are no longer speculative. At the same time, they are not yet broadly production-ready in the way language or vision foundation models (including VLMs) have become.

They are, however, already being deployed in highly constrained settings such as synthetic data generation, simulation-based training, and closed-loop evaluation in robotics and autonomous systems. These are genuine deployments, but they operate within carefully bounded assumptions. Long-horizon stability, controllable interaction over extended time scales, and reliable operation in open-ended environments remain unsolved engineering problems.

In that sense, we are past proof-of-concept for constrained world modeling, and early in real deployment for very specific use cases. Progress is now limited less by the availability of ideas than by engineering reality: stability under feedback, control over long-horizon dynamics, data efficiency, and robustness under real-world variation. World foundation models already matter in environments where assumptions hold and safety margins are high, but their applicability narrows rapidly as those constraints are relaxed.
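One reason long horizons are hard can be shown with a toy calculation. The sketch below assumes a "real" system that grows by 5% per step and a learned model that is off by one percentage point; both rates are made up. The one-step error is small, but it compounds as the rollout feeds its own predictions back in.

```python
from typing import Callable, List

def true_step(x: float) -> float:
    return 1.05 * x   # hypothetical real dynamics

def learned_step(x: float) -> float:
    return 1.04 * x   # hypothetical learned dynamics, slightly wrong

def rollout(step: Callable[[float], float], x0: float, horizon: int) -> List[float]:
    """Apply the step function repeatedly, feeding each output back in."""
    xs = [x0]
    for _ in range(horizon):
        xs.append(step(xs[-1]))
    return xs

horizon = 50
truth = rollout(true_step, 1.0, horizon)
model = rollout(learned_step, 1.0, horizon)
errors = [abs(t - m) for t, m in zip(truth, model)]

for k in (1, 10, 50):
    print(f"step {k:2d}: absolute error {errors[k]:.3f}")
```

A model that looks accurate over one step can be badly wrong after fifty, which is why current deployments favor short horizons and frequent re-grounding in real observations.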

It is also worth noting that not all observers are convinced that today's systems deserve the name "world model" yet. A recent Quanta Magazine survey of the field (Johnson, 2025) traces the idea back to Craik's original formulation, but argues that many contemporary efforts amount to large-scale multimodal training in the hope that coherent internal world representations will emerge implicitly.

In this view, systems trained on vast mixtures of text, video, and simulation data often learn collections of powerful heuristics rather than a single, consistent model of how the world works. These heuristics can perform impressively within familiar regimes, but tend to fail under even mild distribution shifts, where robustness would require a genuinely coherent internal state and transition structure. From this perspective, world models remain more an aspiration than a settled capability: widely believed to be necessary, actively pursued by major labs, but not yet clearly realized in a form that supports stable, long-horizon interaction in open-ended environments (Johnson, 2025).

If world models are meant to operate in reality, then constrained versions are not a limitation but the only sensible place to begin. Fully general world modeling would require capturing the underlying structure of reality itself, a problem that remains unresolved even in the natural sciences. By contrast, bounded environments, restricted interactions, and explicit assumptions make learning dynamics tractable and evaluation meaningful. Seen this way, today's successes are not failed attempts at generality, but necessary and deliberate engineering choices. World foundation models are advancing where the world can be simplified, parameterized, or partially closed; modeling open-ended reality remains an open research question rather than an imminent milestone.

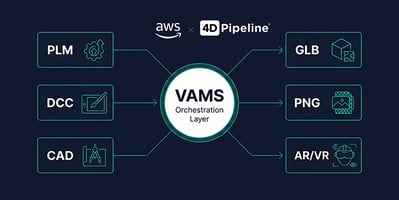

How 4D Pipeline Can Help

World foundation models require high-quality 3D environments to learn physics. With over 14 years of cross-platform graphics engineering experience, our team can help you evaluate whether your existing spatial data assets are ready for physical AI applications.

We have built pipelines across Unity, Unreal, WebGL, USD, and custom rendering backends, and we understand what it takes to move from visualization-quality 3D to simulation-ready environments. Whether your goal is to assess your digital twin readiness, build USD conversion workflows, or connect your 3D infrastructure to emerging AI platforms, we can help you translate WFM opportunities into practical next steps.

Already have a project in mind? Click here to schedule a consultation.

References

Foundational Research:

- Ha, D., & Schmidhuber, J. (2018). World Models. arXiv:1803.10122. https://arxiv.org/abs/1803.10122
- Schrittwieser, J. et al. (2020). Mastering Atari, Go, Chess and Shogi by Planning with a Learned Model. Nature. https://doi.org/10.1038/s41586-020-03051-4
- Bommasani, R. et al. (2021). On the opportunities and risks of foundation models. arXiv:2108.07258. https://arxiv.org/abs/2108.07258

Current Systems:

- Google DeepMind. (2025). Genie 3: A new frontier for world models. https://deepmind.google/blog/genie-3-a-new-frontier-for-world-models/
- NVIDIA. (2025). Cosmos: World foundation models for physical AI. https://www.nvidia.com/en-us/ai/cosmos/
- NVIDIA. (2025). What are world models and how are they built? https://www.nvidia.com/en-us/glossary/world-models/

Industry Analysis:

- Johnson, J. (2025). "World models," an old idea in AI, mount a comeback. Quanta Magazine. https://www.quantamagazine.org/world-models-an-old-idea-in-ai-mount-a-comeback-20250902/
- Marr, B. (2025). The next giant leap for AI is called world models. Forbes. https://www.forbes.com/sites/bernardmarr/2025/12/08/the-next-giant-leap-for-ai-is-called-world-models/