
Photogrammetry vs Gaussian Splatting in Production Workflows

Understanding when to use geometry-first reconstruction versus appearance-first capture

The progression from traditional photogrammetry to neural representations such as Neural Radiance Fields (NeRFs) and, more recently, Gaussian Splatting marks a fundamental shift in how we approach 3D reconstruction.

Until recently, neural reconstruction methods required research-grade infrastructure and specialized expertise. That's changed dramatically. You can now capture photorealistic, interactive 3D scenes using a Meta Quest headset or iPhone in under 5 minutes. This accessibility makes Gaussian Splatting the first neural method practical for production evaluation and deployment.

Three distinct approaches now tackle image-based 3D reconstruction:

Photogrammetry operates through a geometry-first pipeline, reconstructing surfaces from overlapping images to produce metrically accurate meshes. The output is explicit geometry with baked textures.

Neural Radiance Fields (NeRFs) learn volumetric representations through neural networks. They predict color and density at any 3D point, achieving photorealistic view-dependent rendering. Modern implementations like Instant-NGP can reach 10-30 FPS. Tools like Luma AI use NeRF-based approaches.

Gaussian Splatting learns point-based representations that rasterize directly at 90+ FPS while maintaining NeRF-level quality. It achieves photorealistic appearance at interactive speeds, making it the first neural method practical for real-time production.

This article examines how these methods differ in practice and when to use each in production workflows.

For readers new to photogrammetry, our earlier article "Building Digital Twins from Reality: The Photogrammetry Approach" provides context on when and why to use reality capture methods.

A Brief Recap: Where Photogrammetry Took Us

Photogrammetry remains the cornerstone of reality-first digital twins. It begins with overlapping imagery and, through feature detection, matching and triangulation, reconstructs a measurable 3D surface.

Using Structure from Motion (SfM), we estimate both camera poses and a sparse point cloud. From there, Multi-View Stereo (MVS) densifies that cloud into detailed geometry, and algorithms such as Poisson Surface Reconstruction or Delaunay Triangulation transform the point set into a continuous mesh.

The textures mapped to that mesh are essentially baked and reprojected photographs. This means that illumination details such as highlights, shadows, and reflections are captured as part of the texture itself. While special capture setups using cross-polarized lighting can separate the albedo channel from shading, they still cannot recover full material properties such as specular response or dielectric behavior.

The result can be a beautifully accurate model of geometry. However, thin structures, glass, and shiny surfaces often confound reconstruction, and the lighting baked into the surface prevents true relighting or physically based rendering.
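To make the triangulation step of the SfM stage concrete, here is a minimal Python sketch. It uses midpoint triangulation of two viewing rays, a simple stand-in chosen for brevity rather than what any particular photogrammetry package does; production solvers refine many views jointly through bundle adjustment. The function name `triangulate_midpoint` and the sample camera values are illustrative assumptions.

```python
def _dot(u, v):
    """Dot product of two 3-vectors."""
    return sum(a * b for a, b in zip(u, v))


def triangulate_midpoint(c1, d1, c2, d2):
    """Estimate a 3D point from two camera rays (center c, direction d).

    Finds the closest point on each ray and returns their midpoint.
    """
    w = [a - b for a, b in zip(c1, c2)]
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w), _dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    s = (b * e - c * d) / denom  # parameter along ray 1
    t = (a * e - b * d) / denom  # parameter along ray 2
    p1 = [ci + s * di for ci, di in zip(c1, d1)]
    p2 = [ci + t * di for ci, di in zip(c2, d2)]
    return [(u + v) / 2 for u, v in zip(p1, p2)]


# Two hypothetical cameras one meter apart, both observing the same feature.
point = triangulate_midpoint(
    c1=(0.0, 0.0, 0.0), d1=(0.5, 0.2, 4.0),
    c2=(1.0, 0.0, 0.0), d2=(-0.5, 0.2, 4.0),
)
```

With noise-free rays like these, the two closest points coincide and the midpoint is the observed feature itself. With real, noisy matches the rays miss each other slightly, which is one reason sharp, well-matched features matter so much during capture.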

From Geometry to Radiance: Neural Radiance Fields

Neural Radiance Fields (NeRFs), introduced by Mildenhall et al. in 2020, changed how we think about 3D reconstruction. Instead of modeling surfaces explicitly, NeRFs learn a continuous function that maps any 3D point and viewing direction to a color and volumetric density.

In practice, NeRFs don't reconstruct a mesh. Instead, they learn a volumetric field that assigns each point in space a density and a color value. By casting rays through this field and accumulating samples along each ray, the network learns to reproduce the appearance of the scene from any viewpoint. The result behaves as if the model had learned how light exists within the scene, though what it truly captures is how radiance varies with position and direction.

The advantage is clear: view-dependent appearance and photometric accuracy. However, NeRFs are computationally expensive. Traditional implementations render through volumetric ray marching, integrating hundreds of samples per pixel. Even fast variants like Instant-NGP (Müller et al., 2022) achieve near real-time performance (10-30 FPS) on high-end GPUs but still fall short of the interactive rates needed for many production applications.
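The ray accumulation described above can be sketched in a few lines of Python. This is a simplified illustration of the discrete volume rendering sum used by NeRF-style methods, assuming uniformly spaced samples and densities and colors that a trained network would normally supply. The function name `composite_ray` and the sample values are hypothetical.

```python
import math


def composite_ray(densities, colors, step):
    """Accumulate RGB along one ray from per-sample density and color.

    Each sample contributes its color weighted by its own opacity and by
    the transmittance (light not yet absorbed) in front of it.
    """
    transmittance = 1.0
    pixel = [0.0, 0.0, 0.0]
    for sigma, rgb in zip(densities, colors):
        alpha = 1.0 - math.exp(-sigma * step)  # opacity of this ray segment
        weight = transmittance * alpha
        for k in range(3):
            pixel[k] += weight * rgb[k]
        transmittance *= 1.0 - alpha
    return pixel, transmittance


# Empty space first, then a dense red surface, then green behind it.
pixel, remaining = composite_ray(
    densities=[0.0, 1e9, 1.0],
    colors=[(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)],
    step=0.1,
)
```

The dense red sample absorbs the ray, so the green sample behind it contributes nothing. Repeating this loop for hundreds of samples on every pixel, with a network query per sample, is where the rendering cost comes from.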

NeRF proved that radiance, not just geometry, could be reconstructed. Yet, for real-world use, the method remained slower than needed and too opaque to integrate into practical pipelines. Tools like Luma AI demonstrate the quality achievable with NeRF-based approaches, but the speed limitation remains.

NeRF overview: the neural radiance field scene representation and the rendering procedure [Source: Mildenhall et al. 2020]

Enter Gaussian Splatting

To understand how Gaussian Splatting differs, we need to understand what it actually produces. At its core, a trained Gaussian Splat is a point cloud, typically stored as a .ply file, where each point encodes parameters such as position, opacity, view-dependent color, shape and orientation. These attributes are used by the rasterizer to generate elliptical Gaussian splats that, when combined, recreate the visual characteristics of the scene.

The idea, introduced by Bernhard Kerbl et al. (2023), was deceptively simple: replace the implicit volumetric field of NeRF with an explicit, differentiable "cloud" of anisotropic 3D Gaussians. Each Gaussian carries a position, a covariance (defining its shape and orientation in space), an opacity value, and a set of spherical harmonic coefficients describing color that varies with view direction.
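As a rough illustration of how a covariance can encode shape and orientation, the sketch below builds a 3x3 covariance from a per-axis scale and a rotation quaternion, following the factorization Σ = R S Sᵀ Rᵀ described by Kerbl et al. (2023). The function names and the example values are assumptions made for this sketch, not part of any library.

```python
import math


def quat_to_rotation(w, x, y, z):
    """Convert a quaternion (normalized here) to a 3x3 rotation matrix."""
    n = math.sqrt(w * w + x * x + y * y + z * z)
    w, x, y, z = w / n, x / n, y / n, z / n
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]


def gaussian_covariance(scale, quat):
    """Build the covariance R S S^T R^T from axis scales and a rotation."""
    R = quat_to_rotation(*quat)
    # M = R @ diag(scale): scale each column of R by the matching axis length.
    M = [[R[i][j] * scale[j] for j in range(3)] for i in range(3)]
    # Covariance = M @ M^T, symmetric and positive semi-definite by construction.
    return [[sum(M[i][k] * M[j][k] for k in range(3)) for j in range(3)]
            for i in range(3)]


# A Gaussian stretched along its local y axis, rotated 90 degrees about z.
half = math.radians(90) / 2
cov = gaussian_covariance(scale=(1.0, 2.0, 3.0),
                          quat=(math.cos(half), 0.0, 0.0, math.sin(half)))
```

After the rotation, the long local y axis points along world x, so the variance along x becomes 4 and the variance along y becomes 1. Optimizing scale and rotation separately keeps the covariance valid throughout training, which would not be guaranteed if the nine matrix entries were adjusted directly.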

Gaussian Splat training process, as illustrated in the training diagram from Kerbl et al. (2023). Note that, for real-world cases, the SfM points and camera poses come from COLMAP (or COLMAP-like operations). In our case, we will also extract these points and poses from the original 3D scene. [Image Source: Kerbl et al. 2023]

Because Gaussians are explicit primitives, the rendering stage no longer depends on volumetric ray marching. Each Gaussian can be rasterized directly onto the image plane, producing photorealistic frames at interactive rates, now measured in frames per second rather than seconds per frame.

Visually, it's quite astonishing. The output resembles a probabilistic point cloud that coalesces into reality when rasterized. These Gaussians overlap and blend according to their opacity, approximating light transport through alpha compositing. The system achieves over 90 frames per second at 1080p while maintaining competitive image quality against state-of-the-art NeRFs such as Mip-NeRF 360 (Barron et al., 2022).

Here is such an example from Christoph Schindelar:

Image Credit https://superspl.at/view?id=c67edb74 by schindelar3d
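The overlap-and-blend behavior described in this section can be sketched for a single pixel as follows. This is a simplified CPU illustration, not the tile-based GPU rasterizer of the reference implementation: it assumes each Gaussian has already been projected to 2D and is described by a hypothetical dictionary with `center`, `inv_cov`, `opacity`, `color`, and `depth` keys. The 0.99 opacity cap and the early-exit threshold are illustrative choices.

```python
import math


def splat_alpha(splat, pixel):
    """Opacity of one projected Gaussian at a pixel (2D Gaussian falloff)."""
    dx = pixel[0] - splat["center"][0]
    dy = pixel[1] - splat["center"][1]
    a, b, c = splat["inv_cov"]  # symmetric 2x2 inverse covariance [[a, b], [b, c]]
    falloff = math.exp(-0.5 * (a * dx * dx + 2 * b * dx * dy + c * dy * dy))
    return min(0.99, splat["opacity"] * falloff)


def blend_pixel(splats, pixel):
    """Front-to-back alpha compositing of depth-sorted splats."""
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0
    for splat in sorted(splats, key=lambda s: s["depth"]):
        alpha = splat_alpha(splat, pixel)
        for k in range(3):
            color[k] += transmittance * alpha * splat["color"][k]
        transmittance *= 1.0 - alpha
        if transmittance < 1e-4:  # later splats would be invisible
            break
    return color, transmittance


splats = [
    {"center": (10, 10), "inv_cov": (1.0, 0.0, 1.0), "opacity": 1.0,
     "color": (0.0, 1.0, 0.0), "depth": 5.0},   # far, green
    {"center": (10, 10), "inv_cov": (1.0, 0.0, 1.0), "opacity": 0.5,
     "color": (1.0, 0.0, 0.0), "depth": 2.0},   # near, red
]
color, remaining = blend_pixel(splats, pixel=(10, 10))
```

The near red splat is blended first and lets half of the light through, so the green splat behind it contributes at roughly half strength. Because each pixel only sorts and sums a short list of primitives, there is no per-sample network query as in ray marching.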

The Key Distinction

The fundamental difference is this: Photogrammetry yields explicit, measurable geometry. Gaussian Splatting produces an appearance-based representation: its primitives are explicit, but surfaces are only implied by them, so it trades physical accuracy for perceptual realism and speed.

Let's examine what this means in practice across four critical dimensions: data preparation, representation, rendering and relighting, and integration.

1. Data Preparation

Photogrammetry

A successful photogrammetry capture depends on consistency: 60 to 80% image overlap, stable lighting, and sharp, well-exposed features. The images are processed through Structure from Motion to estimate camera poses and sparse geometry, then through Multi-View Stereo to generate dense depth maps. The full workflow (feature extraction, bundle adjustment, depth fusion, meshing, and texturing) can take hours depending on resolution and scene complexity, though tools such as Agisoft Metashape and Epic Games' RealityScan continue to improve.
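The overlap guideline translates into a maximum distance between neighboring camera positions. The sketch below works that out under simplifying assumptions: a pinhole camera, a roughly flat surface facing the camera, and sideways movement parallel to that surface. The function names and the example distance and field of view are illustrative.

```python
import math


def footprint_width(distance_m, hfov_deg):
    """Width of the surface area covered by one image, in meters."""
    return 2.0 * distance_m * math.tan(math.radians(hfov_deg) / 2.0)


def max_camera_spacing(distance_m, hfov_deg, overlap):
    """Largest sideways step between shots that keeps the given overlap."""
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be a fraction in [0, 1)")
    return footprint_width(distance_m, hfov_deg) * (1.0 - overlap)


# Shooting a wall from 5 m with a 90-degree horizontal field of view.
loose = max_camera_spacing(5.0, 90.0, overlap=0.6)
tight = max_camera_spacing(5.0, 90.0, overlap=0.8)
```

In this example each image covers about 10 m of wall, so 60% overlap allows a step of about 4 m between shots while 80% overlap requires about 2 m. Moving from the lower to the upper end of the range therefore doubles the number of images for the same coverage.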

Gaussian Splatting

In Gaussian Splatting, the front end is almost identical. It still relies on SfM to compute camera poses and a sparse point cloud, often using COLMAP or similar open-source solvers. The key difference lies in what happens next: instead of densifying geometry through stereo matching, the algorithm initializes a field of Gaussians at those sparse points and learns to adjust their position, opacity, shape and color to reproduce the input images.

This training phase is conceptually similar to NeRF optimization but operates on explicit primitives rather than volumetric samples. Once trained, the model can be rendered directly without an intermediate mesh or texture bake.
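The shared SfM front end can be run with COLMAP's command-line interface. The sketch below only assembles the three commands for a sparse reconstruction (feature extraction, matching, mapping) so they can be inspected before running. The directory layout (`images/`, `database.db`, `sparse/`) and the helper name `colmap_sparse_commands` are assumptions for this example, and exhaustive matching is just one of several matchers COLMAP offers.

```python
from pathlib import Path


def colmap_sparse_commands(dataset_dir):
    """Return the COLMAP CLI calls for a sparse SfM reconstruction."""
    root = Path(dataset_dir)
    database = str(root / "database.db")
    images = str(root / "images")
    sparse = str(root / "sparse")  # this directory must exist before mapping
    return [
        ["colmap", "feature_extractor",
         "--database_path", database, "--image_path", images],
        ["colmap", "exhaustive_matcher",
         "--database_path", database],
        ["colmap", "mapper",
         "--database_path", database, "--image_path", images,
         "--output_path", sparse],
    ]


commands = colmap_sparse_commands("my_scan")
```

With COLMAP installed, each entry can be passed to `subprocess.run(cmd, check=True)` in order. The resulting camera poses and sparse points are what both a photogrammetry pipeline and a Gaussian Splatting trainer would typically start from.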

Practical impact: Gaussian Splatting eliminates multi-view stereo and meshing, significantly reducing the time between image capture and interactive visualization. The trade-off is that the result is a radiance field, not a metrically precise surface (suitable for visualization, not for measurement).

2. Representation

Photogrammetry

The final output is a polygonal mesh with a texture map. This makes it compatible with all standard DCC tools and real-time engines (see how CD PROJEKT RED used RealityScan to help create The Witcher 4 Unreal Engine 5 Tech Demo). The geometry is explicit, measurable, and editable, but surface complexity can balloon file sizes. Mesh decimation or retopology is often needed before export, and high-resolution textures can reach gigabytes in size.

Gaussian Splatting

The scene is stored as an unstructured set of anisotropic Gaussian primitives, each with spatial covariance, opacity, and view-dependent color coefficients. There is no mesh, no UVs, and no need for topology management. This representation is continuous in space but discrete in number (typically one to five million Gaussians for a medium-scale scene).

Because it encodes view-dependent color directly, it captures subtle light interactions such as reflections and parallax that a single texture cannot. However, since geometry is implicit, you cannot easily edit or measure it.

Practical impact: Gaussian Splatting trades topological control for appearance fidelity. For visualization, this means less pre-processing. For CAD or survey applications, it lacks the geometric precision of traditional photogrammetry.
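To get a feel for what one to five million Gaussians means in storage terms, here is a back-of-the-envelope estimate. It assumes every attribute is stored as an uncompressed 32-bit float and counts only the parameters named in this article: position (3), scale (3), rotation quaternion (4), opacity (1), and spherical harmonic color coefficients for three channels. Real file layouts may add fields or apply compression, so treat the numbers as rough orders of magnitude.

```python
def floats_per_gaussian(sh_degree=3):
    """Number of stored values for one Gaussian at a given SH degree."""
    position, scale, rotation, opacity = 3, 3, 4, 1
    sh_coefficients = 3 * (sh_degree + 1) ** 2  # RGB x basis functions
    return position + scale + rotation + opacity + sh_coefficients


def estimate_megabytes(num_gaussians, sh_degree=3, bytes_per_float=4):
    """Approximate uncompressed size in megabytes (1 MB = 1e6 bytes)."""
    return num_gaussians * floats_per_gaussian(sh_degree) * bytes_per_float / 1e6


small_scene = estimate_megabytes(1_000_000)
large_scene = estimate_megabytes(5_000_000)
flat_color = estimate_megabytes(1_000_000, sh_degree=0)
```

Under these assumptions, one million Gaussians take about 236 MB and five million about 1.2 GB, while dropping to view-independent color (degree 0) cuts the one-million case to about 56 MB. Gaussian count and spherical harmonic degree are therefore the main levers on file size.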

3. Rendering and Relighting

Photogrammetry

Rendering photogrammetric models in real time is straightforward but limited. Textures carry baked lighting; shadows and specular highlights from the capture environment persist unless removed during processing. True relighting requires separating albedo, normals, and material parameters, something standard photogrammetry cannot provide without polarized or structured-light capture.

Gaussian Splatting

In Gaussian Splatting, appearance is inherently view-dependent. Each Gaussian carries a learned spherical harmonic function describing how color varies with direction. This means the model already encodes how the captured light looks from different angles. While it is not physically based in the path-tracing sense, it reproduces view-dependent effects, such as highlights that shift as the camera moves, in a way that appears natural and continuous.

The trade-off is that the lighting is intrinsic to the splat model. You cannot easily isolate albedo or roughness channels for conventional material workflows. Hybrid approaches, combining splats for view-dependent color with meshes for physically based materials, are an emerging research area.
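The view-dependent color stored on each Gaussian can be illustrated with a low-order spherical harmonic evaluation. The sketch below uses only degrees 0 and 1 (four coefficients per channel) for brevity, whereas trained splats commonly go higher. The sign convention, the 0.5 offset, and the clamp at zero are assumptions modeled on common open-source implementations, and the function name `sh_color` is hypothetical.

```python
import math

SH_C0 = 0.28209479177387814  # degree-0 real SH basis constant
SH_C1 = 0.4886025119029199   # degree-1 real SH basis constant


def sh_color(coeffs, direction):
    """Evaluate RGB color for a viewing direction.

    coeffs: four (r, g, b) triples, one per basis function.
    direction: vector from the camera toward the Gaussian (any length).
    """
    length = math.sqrt(sum(d * d for d in direction))
    x, y, z = (d / length for d in direction)
    basis = [SH_C0, -SH_C1 * y, SH_C1 * z, -SH_C1 * x]
    rgb = []
    for channel in range(3):
        value = sum(b * c[channel] for b, c in zip(basis, coeffs)) + 0.5
        rgb.append(max(0.0, value))
    return rgb


# A Gaussian whose red channel brightens when viewed along +z.
coeffs = [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 0.0)]
seen_from_front = sh_color(coeffs, (0.0, 0.0, 1.0))
seen_from_back = sh_color(coeffs, (0.0, 0.0, -1.0))
```

The same Gaussian returns a strong red from one side and almost none from the other, which is how shifting highlights are reproduced. Nothing in these coefficients separates surface color from lighting, which is why albedo and roughness cannot be read back out of them.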

Practical impact: Gaussian Splatting offers view-dependent appearance out of the box but lacks explicit control over material parameters. Photogrammetry provides the opposite: meshes that accept controllable PBR materials, but with illumination baked into the captured textures.

4. Integration and Toolchain Compatibility

Photogrammetry

Photogrammetry outputs integrate seamlessly with established ecosystems: Unreal Engine, Blender, Unity, and CAD platforms. Meshes can be UV-unwrapped, re-topologized, and shaded using physically based rendering (PBR) materials. This makes photogrammetry the preferred method in industries that depend on geometric accuracy (architecture, surveying, and manufacturing).

Gaussian Splatting

The format is younger but growing rapidly. The reference implementation from Inria and its derivatives export to standalone viewers such as Supersplat (browser-based). Integration into Blender, Houdini and engines like Unreal and Unity is under active development, often via plugins that rasterize splats as point-cloud-like geometry buffers or hybrid render passes.

From a pipeline perspective, splatting is closer to volumetric video than to traditional 3D assets. It shines in immersive viewing, virtual set capture, and cultural heritage visualization where interactivity and realism are more important than mesh editing.

Practical impact: Gaussian Splatting is moving toward production readiness (it was actually used in Dune 2 and James Gunn's Superman), particularly for shot planning and real-time immersive environments. For geometric interoperability, photogrammetry remains essential.

A Hybrid Future

For many workflows, the future is not one or the other. The two methods complement each other. Photogrammetry still provides the geometric backbone (camera poses, scale, and spatial reference). Gaussian Splatting can build on that data to deliver view-dependent realism and interactive playback.

The same image set can power both pipelines, with meshes used for measurement and splats used for rendering. In this sense, Gaussian Splatting represents not the replacement of photogrammetry, but its next layer: a way to move from geometry capture to light capture, from static representation to dynamic perception.

Managing Multiple Representations

Platforms like NVIDIA Omniverse are already built to handle this hybrid reality. Omniverse's USD-based architecture can manage photogrammetric meshes, NeRF volumes, and Gaussian Splat assets within the same scene, each referenced and composed based on use case. Your CAD geometry provides dimensional accuracy, photogrammetry captures as-built conditions, and Gaussian Splats deliver photorealistic visualization, all working together in a unified digital twin.

This isn't theoretical. Production teams are already building workflows where the same physical asset exists in multiple representations: a precise mesh for engineering analysis, a splat model for client presentations, and a NeRF capture for marketing visualization. The platform manages which representation loads based on the task at hand.

Summary Comparison

| Aspect | Photogrammetry | Gaussian Splatting |
|---|---|---|
| Input | Overlapping photos | Overlapping photos |
| Initial stage | Structure from Motion (SfM) | Structure from Motion (SfM) |
| Output | Mesh + texture map | Field of 3D Gaussians |
| Training/rendering | Geometric reconstruction, no learning | Gradient descent optimization on Gaussians |
| Runtime performance | Real-time once textured | Real-time (interactive rendering native) |
| Lighting | Baked-in texture illumination | View-dependent radiance (learned) |
| Relighting capability | Limited; requires albedo separation | Intrinsic view dependence, not PBR accurate (in the base method) |
| Geometry accuracy | Metrically precise | Approximate; not directly measurable |
| Editability | Fully editable geometry | Limited (no mesh topology in the basic version) |
| File size | Large (geometry + textures) | Compact (millions of small primitives) |
| Use cases | Surveying, CAD, game assets | Immersive visualization, volumetric video, digital twins (appearance-first) |

Practical Implications

The choice between photogrammetry and Gaussian Splatting comes down to your priorities:

Choose photogrammetry when:

- You need measurable, metrically accurate geometry

- CAD or BIM integration is required

- You need editable meshes for downstream workflows

- Material parameters need to be separated and controlled

- The application demands geometric precision over appearance

Choose Gaussian Splatting when:

- Visual fidelity and view-dependent realism are priorities

- Interactive, real-time visualization is essential

- Rapid turnaround from capture to viewing matters

- The application is appearance-driven (visualization, immersive experiences)

- You need natural handling of reflections and view-dependent lighting

Use both when:

- You need geometric accuracy for some workflows and appearance for others

- You're building digital twins that serve both measurement and visualization needs

- You want to maximize the value from a single image capture session
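The checklists above can be condensed into a small decision helper. This is only a sketch of the reasoning in this section, with hypothetical flag names and return values. Real projects will weigh more factors, such as budget, capture conditions, and existing tooling.

```python
def recommend_capture(needs_measurement=False, needs_editable_mesh=False,
                      needs_view_dependent_realism=False,
                      needs_fast_turnaround=False):
    """Map project requirements to a reconstruction approach."""
    geometry_first = needs_measurement or needs_editable_mesh
    appearance_first = needs_view_dependent_realism or needs_fast_turnaround
    if geometry_first and appearance_first:
        return "both"  # one image set, two pipelines
    if geometry_first:
        return "photogrammetry"
    if appearance_first:
        return "gaussian_splatting"
    return "either"  # no strong requirement in either direction


survey_job = recommend_capture(needs_measurement=True)
walkthrough = recommend_capture(needs_view_dependent_realism=True,
                                needs_fast_turnaround=True)
digital_twin = recommend_capture(needs_measurement=True,
                                 needs_view_dependent_realism=True)
```

A survey job maps to photogrammetry, an immersive walkthrough to Gaussian Splatting, and a digital twin that serves both measurement and visualization to running both pipelines from the same capture.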

Current Tooling

The ecosystem for Gaussian Splatting has matured rapidly:

Training and Processing:

- GraphDeco 3D Gaussian Splatting (INRIA official repository)

- Postshot (Jawset Visual Computing): Production-ready suite (Commercial)

- LichtFeld Studio: Open implementation

Viewers and Integration:

- PlayCanvas Supersplat: Browser-based viewer/editor

- Unreal Engine plugins (e.g., XScene-UEPlugin)

- Adobe "Project New Depth": Photoshop-like editing with layers

Consumer-Accessible Tools:

- Meta Horizon Hyperscape: Scan rooms with Quest 3/3S in under 5 minutes, view in photorealistic VR

- Scaniverse (Niantic): Free iOS app for phone-based scanning, 1-3 minute capture time, view in VR via "Into The Scaniverse" web app

- Over 50,000 user-created splats already shared globally

This accessibility represents a significant shift. Traditional photogrammetry required understanding SfM pipelines, meshing algorithms, and texture baking. Gaussian Splatting can now be tested with consumer devices, making the technology immediately evaluable for pilot projects.

Conclusion

Gaussian Splatting doesn't replace photogrammetry outright; it extends it. SfM-derived camera poses remain the backbone of most pipelines. What changes is how we represent the result: from a mesh that stores shape and texture, to a field that stores radiance and appearance.

In that sense, Gaussian Splatting is not just another rendering technique. It's a shift in representation, from surfaces to probabilities, from geometry to perception. And in that shift, we may be witnessing the next logical step in how digital twins evolve: not as static reconstructions, but as living, view-dependent realities.

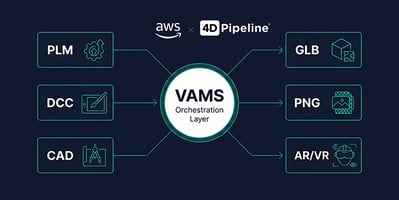

How 4D Pipeline Can Help

At 4D Pipeline, we help teams navigate the evolving landscape of 3D reconstruction technologies. Whether you're evaluating Gaussian Splatting for your visualization pipeline, building hybrid workflows that leverage both photogrammetry and neural methods, or optimizing existing reality capture processes, we combine deep technical expertise with practical production experience to deliver solutions that work in real-world contexts.

From workflow analysis and technology assessment through implementation and optimization, we guide you through the complexity of choosing and deploying the right reconstruction method for each use case.


References

Photogrammetry and Structure from Motion:

- Schönberger, J. L., & Frahm, J.-M. (2016). Structure-from-Motion Revisited. In Conference on Computer Vision and Pattern Recognition (CVPR). https://ieeexplore.ieee.org/document/7780814

- COLMAP Documentation: https://colmap.github.io/

Neural Radiance Fields:

- Mildenhall, B., Srinivasan, P. P., Tancik, M., Barron, J. T., Ramamoorthi, R., & Ng, R. (2020). NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis. arXiv:2003.08934. https://arxiv.org/abs/2003.08934

- Barron, J. T., Mildenhall, B., Verbin, D., Srinivasan, P. P., & Hedman, P. (2022). Mip-NeRF 360: Unbounded Anti-Aliased Neural Radiance Fields. arXiv:2111.12077. https://arxiv.org/abs/2111.12077

- Müller, T., Evans, A., Schied, C., & Keller, A. (2022). Instant Neural Graphics Primitives with a Multiresolution Hash Encoding. arXiv:2201.05989. https://arxiv.org/abs/2201.05989

Gaussian Splatting:

- Kerbl, B., Kopanas, G., Leimkühler, T., & Drettakis, G. (2023). 3D Gaussian Splatting for Real-Time Radiance Field Rendering. ACM Transactions on Graphics (SIGGRAPH 2023), 42(4). https://arxiv.org/abs/2308.04079

- Official implementation: https://github.com/graphdeco-inria/gaussian-splatting

About this series: This is Part 2 of our exploration of 3D reconstruction methods, examining how appearance-based neural methods complement and extend traditional geometry-first photogrammetry approaches.